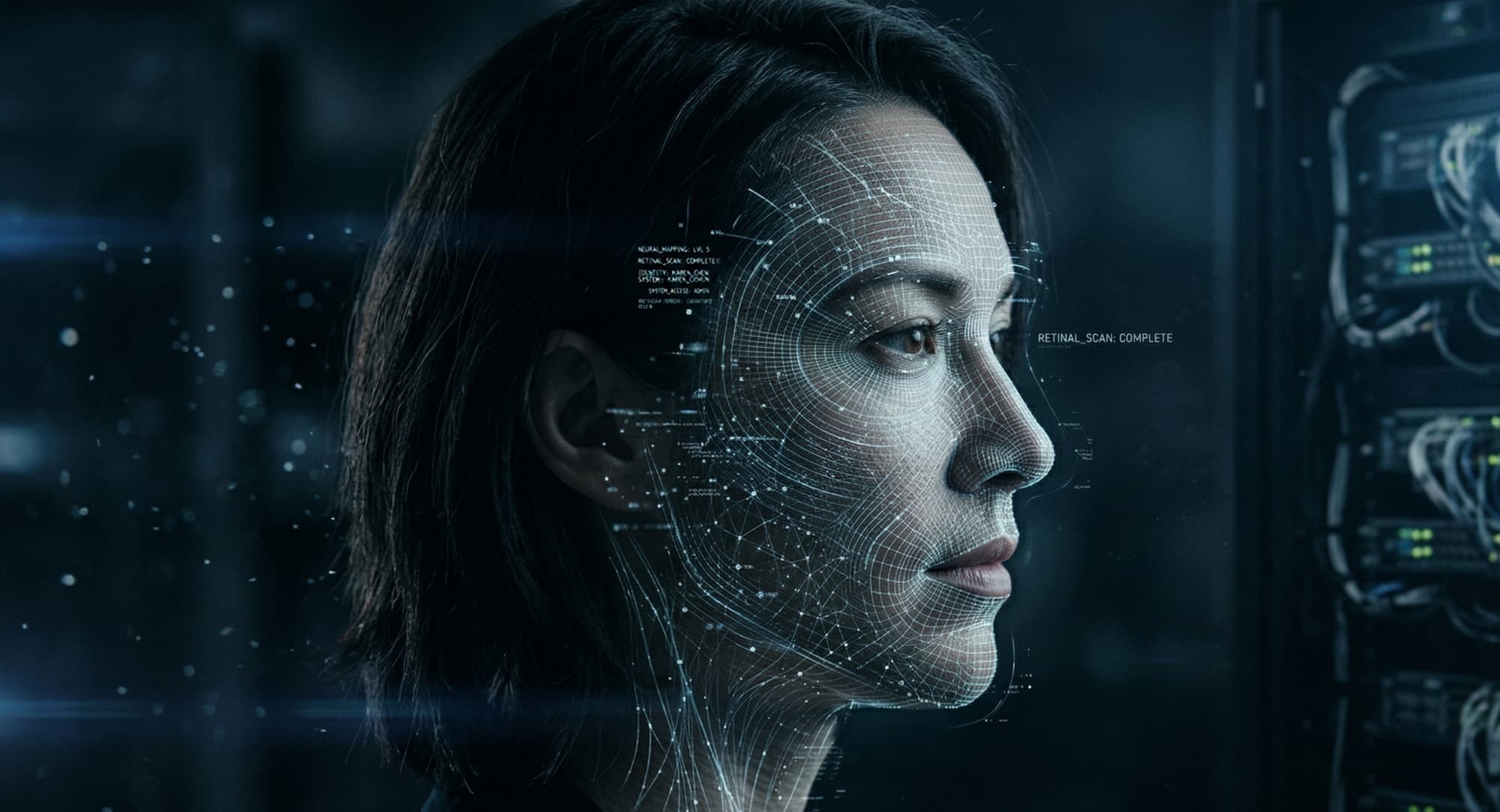

A peer-reviewed study published in Scientific Reports this year describes the deployment of an emotion-aware AI educational system that analyses facial expressions, speech characteristics, and textual responses simultaneously to infer a learner's emotional state in real time.

The system uses BERT-based models for text sentiment analysis and combines them with facial recognition and speech processing to produce a composite emotional assessment of the student. The goal is described as improving learning outcomes by adapting content delivery to the inferred emotional state of the learner.
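To make the idea of a "composite emotional assessment" concrete, here is a minimal sketch of one common way to combine per-modality predictions: late fusion by weighted averaging. This illustrates the general technique only. The article does not say how the paper fuses its three streams, so the label set, the weights, the function names, and the example scores below are all hypothetical.

```python
from typing import Dict, Mapping

# Hypothetical label set; the study's actual emotion categories are not given here.
EMOTIONS = ("engaged", "confused", "frustrated", "bored")


def fuse_modalities(
    per_modality: Mapping[str, Mapping[str, float]],
    weights: Mapping[str, float],
) -> Dict[str, float]:
    """Late fusion: weighted average of per-modality probability distributions."""
    total_weight = sum(weights[name] for name in per_modality)
    if total_weight <= 0:
        raise ValueError("modality weights must sum to a positive value")
    return {
        label: sum(
            weights[name] * probs.get(label, 0.0)
            for name, probs in per_modality.items()
        ) / total_weight
        for label in EMOTIONS
    }


def top_label(fused: Mapping[str, float]) -> str:
    """Return the label with the highest fused probability."""
    return max(fused, key=lambda label: fused[label])


# Made-up scores standing in for the outputs of a text, face, and speech model.
scores = {
    "text": {"engaged": 0.1, "confused": 0.6, "frustrated": 0.2, "bored": 0.1},
    "face": {"engaged": 0.2, "confused": 0.5, "frustrated": 0.2, "bored": 0.1},
    "speech": {"engaged": 0.3, "confused": 0.3, "frustrated": 0.3, "bored": 0.1},
}
equal_weights = {"text": 1.0, "face": 1.0, "speech": 1.0}  # assumed, not from the paper

fused = fuse_modalities(scores, equal_weights)
print(top_label(fused), round(fused[top_label(fused)], 3))
```

Even in this toy form, the point relevant to the rest of this article is visible: the student supplies no input about how they feel. The label is derived entirely from signals captured for other purposes.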

The system achieves 70 to 79 per cent accuracy in labelling emotions from text. On speech-based emotion detection, it reportedly outperforms humans: machines achieve around 70 per cent accuracy in this area, compared to approximately 60 per cent for human assessors.

What Is Actually Happening Here

A student interacting with this system is simultaneously subject to three parallel streams of emotional inference (textual, facial, and vocal), without necessarily being aware that their combination is generating a continuous emotional profile. The system does not ask how the student feels. It determines how the student feels, then acts on that determination.

The framing of this as beneficial, on the grounds that it improves learning outcomes, is worth examining carefully. The inference rests on the assumption that knowing a student's emotional state enables better teaching. That may be true in some respects. But the mechanism by which that knowledge is gathered (passive, multi-channel inference on a child or young person in an educational setting) is precisely the type of practice that Article 5 of the EU AI Act now prohibits in educational institutions across the EU.

The prohibition, which has applied since 2 February 2025, covers AI systems that infer emotions from facial expressions, voice patterns, keystrokes, or body posture in workplace or educational contexts, with a narrow exception for systems intended for medical or safety reasons. The system described in this paper appears to fall squarely within that definition.

The Consent Gap

There is no mention in the published research of how student consent to emotional profiling is obtained, how data is retained, or what constitutional safeguards govern the use of inferred emotional data. The paper's framing presents the inference as a technical feature rather than a rights question.

This reflects a widespread assumption in affective computing research: that emotional inference is a form of observation rather than an act of data extraction. The distinction matters legally and ethically. Observation implies passive witnessing. Inference implies active derivation: constructing a model of a person's internal state from external signals they did not volunteer for that purpose.

HumanSafe Opinion

The following reflects HumanSafe Intelligence's position on this development.

Children in educational settings are among those with the least power to refuse, contest, or understand the emotional profiling they are being subjected to. A constitutional approach to education technology would require that any system touching a learner's emotional experience operates on the basis of explicit, voluntary declaration, not passive multi-channel observation.

The beneficial framing (improving learning outcomes) does not resolve the rights question. The question is not whether the inference serves a good purpose. It is whether inference on a child's emotional state, without their meaningful consent and without constitutional constraint, is the right mechanism at all. Good outcomes do not justify unconstitutional means. That principle applies with particular force where the subjects are young people in institutions where their participation is not freely chosen.

Sources

- A deep learning approach to emotionally intelligent AI for improved learning outcomes — Scientific Reports / Nature, 2026

- The Prohibition of AI Emotion Recognition Technologies in the Workplace under the AI Act — Wolters Kluwer Global Workplace Law & Policy, 2025

- Article 5: Prohibited AI Practices — EU Artificial Intelligence Act official resource